Redis VS Memcached 2018



This post compares the performance of Redis and Memcached, two in-memory, networked object caches.

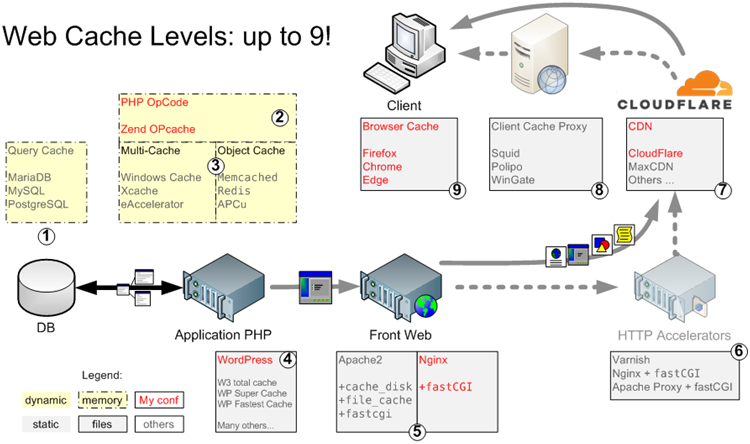

There are 3 categories of caching systems on the server side: memory code caching, memory object caching, and disk file caching. Objects can be anything though, including files. By file I mean any file: the generated HTML code that makes a page, a CSS file, an image…

They are in the 3rd layer on the map below:

More details about other Caching systems here.

Memory Object Caching

Redis, APCu, Memcached, are advanced in-memory caching systems. They can cache anything, since objects can be anything.

- Redis is often used for object caching: it is an in-memory key/value data store, so the results of long queries can be served from it instead of hitting MySQL again.

- APCu is a stripped-down version of APC that keeps only the in-memory caching system.

- Memcached is an older memory caching system, as fast as Redis but with fewer options.

- Apache only offers memory cache for SSL sessions with socache_*.so

Currently, Redis is the most popular, distributed and powerful solution. Memcached offers fewer options, and APCu is local to each PHP server, so instances cannot share content.

What Are Objects in WordPress?

A CMS like WordPress is a good example to work with. What does WordPress cache as objects? The WordPress Codex gives a list of functions your code can call to cache objects, but doesn’t say what objects are. The WordPress developer wiki gives a partial answer:

The WordPress Object Cache is used to save on trips to the database. The Object Cache stores all of the cache data to memory and makes the cache contents available by using a key, which is used to name and later retrieve the cache contents.

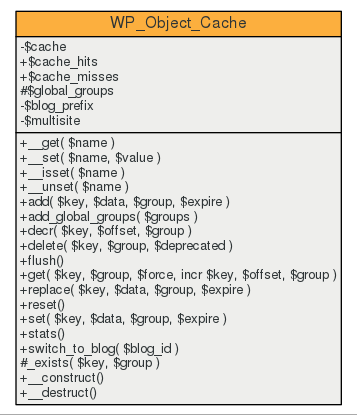

One Stackexchange search later, you have a better answer. Calling the stats() method of the WP_Object_Cache class prints what is in the cache:

Cache Hits: 110
Cache Misses: 98
Group: options - ( 81.03k )
Group: default - ( 0.03k )
Group: users - ( 0.41k )
Group: userlogins - ( 0.03k )
Group: useremail - ( 0.04k )
Group: userslugs - ( 0.03k )
Group: user_meta - ( 3.92k )
Group: posts - ( 1.99k )
Group: terms - ( 1.76k )
Group: post_tag_relationships - ( 0.04k )
Group: category_relationships - ( 0.03k )
Group: post_format_relationships - ( 0.02k )
Group: post_meta - ( 0.36k )

As you can see, all this data comes from queries to the database and is now stored as key/value pairs in the Object Cache system. It can be the fully generated page, an author name, a post ID, etc. It can be anything.
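To read these statistics at a glance, the counters can be turned into a hit ratio and a total size. The sketch below is only an illustration: the function names `hit_ratio` and `groups_total_k` are made up for this example, and it assumes the stats text has the exact shape shown above.

```shell
#!/bin/sh
# Hit ratio in percent: hits / (hits + misses).
hit_ratio() {
    awk -v hits="$1" -v misses="$2" \
        'BEGIN { printf "%.1f\n", 100 * hits / (hits + misses) }'
}

# Sum the sizes that appear as "( 81.03k )" in the stats text read from stdin.
groups_total_k() {
    grep -Eo '\( [0-9.]+k \)' | tr -d '()k ' |
        awk '{ total += $1 } END { printf "%.2f\n", total }'
}

# Counters taken from the output above.
hit_ratio 110 98

# Two of the groups from the output above, as a short sample.
printf '%s\n' 'Group: options - ( 81.03k ) Group: posts - ( 1.99k )' | groups_total_k
```

With 110 hits and 98 misses, a little over half of the lookups were served from the cache, and the options group alone accounts for most of the stored data.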

Other CMSs will structure their Object Cache differently, but the same principles apply. The ultimate goal is to save your code from issuing SQL queries to the database.
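The principle can be sketched in a few lines: look the key up in the cache first, and only run the query (then store its result) on a miss. This is a minimal sketch, not how WordPress implements it. A temporary directory stands in for the cache server, and `slow_query` and `cached_lookup` are hypothetical names standing in for an SQL query and the caching wrapper.

```shell
#!/bin/sh
# Stand-in cache: one file per key in a temporary directory (assumption for
# this sketch; a real setup would talk to Redis or Memcached instead).
CACHE_DIR=$(mktemp -d)
trap 'rm -rf "$CACHE_DIR"' EXIT
DB_HITS=0   # number of times the "database" was queried

# Hypothetical expensive lookup standing in for an SQL query.
slow_query() {
    DB_HITS=$((DB_HITS + 1))
    RESULT="row-for-$1"
}

# Look the key up in the cache first; only query and store on a miss.
cached_lookup() {
    key_file="$CACHE_DIR/$1"
    if [ -f "$key_file" ]; then
        RESULT=$(cat "$key_file")             # cache hit: no query issued
    else
        slow_query "$1"                       # cache miss: query once...
        printf '%s' "$RESULT" > "$key_file"   # ...then store under the key
    fi
}

cached_lookup "post_42"
cached_lookup "post_42"
echo "value=$RESULT db_hits=$DB_HITS"
```

The second lookup of the same key is answered from the cache, so the query counter stays at 1.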

I won’t discuss the relevance of memory object caching; that is an entirely different subject.

Object Cache Systems: Redis vs Memcached

Memcached can only do a small fraction of the things Redis can do.

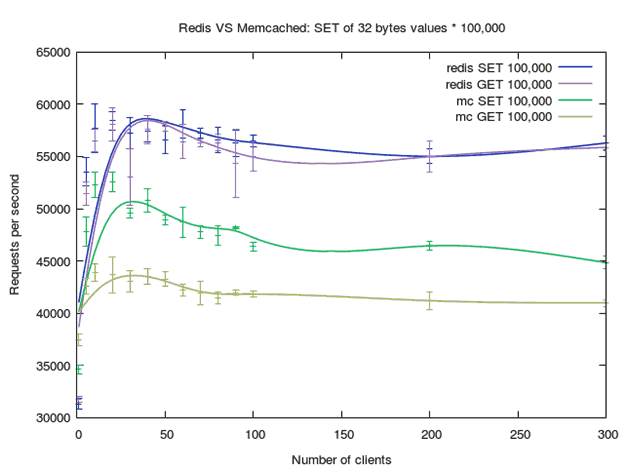

Redis is more powerful, more popular, and better supported than Memcached. Redis is better even where their features overlap, as shown in these load tests.

Features comparison

Both tools are powerful, fast, in-memory, third-party data stores that are useful as a cache. Both could potentially help speed up your application by caching database results, HTML fragments, or anything else that might be expensive to generate. Whether that really holds for database results is debatable, but let’s assume it does.

- Read/write speed: Both are extremely fast. It’s in memory caching, no clear winner.

- Memory usage: Both store only what is needed.

- Memory management: Redis is better.

  - Redis reclaims unused memory and can be flushed. Memcached reserves an amount of memory that cannot be reclaimed.

  - Redis never uses more than what it’s configured for, while Memcached can go beyond (citation needed).

- Disk I/O dumping: A clear win for Redis since it does this by default and has very configurable persistence. Memcached has no mechanisms for dumping to disk without 3rd party tools.

- Scaling: Both are distributed memory object caching systems. Redis includes tools to help you go beyond that such as real clustering while Memcached does not.

More Features For Redis

Memcached is a simple volatile cache server. It allows you to store key/value pairs where the value is limited to being a string up to 1MB. When you restart memcached your data is gone.

Redis can act as a cache as well. It can store key/value pairs too. In Redis they can even be up to 512MB. When you restart Redis your data is still there. You can also turn off persistence.

Redis is more than a cache. It is an in-memory NoSQL data structure server. More info on the LRU and LFU (Least Frequently Used) eviction policies here, and here is a thorough Stackexchange comparison, which I’m not going to steal like some bloggers do.

Both require a software implementation. Objects, strings and template files are not going to cache themselves auto-magically. You cannot just write any PHP code and hope it will get cached by Redis or Memcached. Your code has to call the cache explicitly through a client library; the one I have heard of for PHP and Redis is Predis.

Performance Load Test

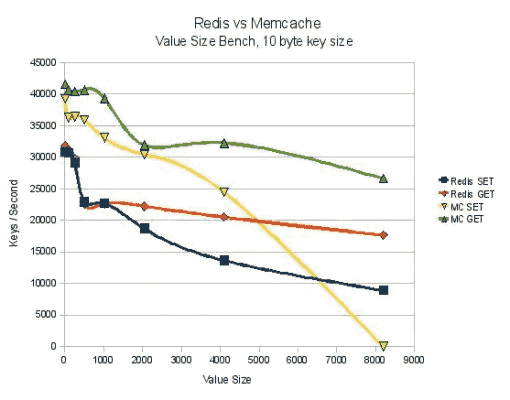

I thought at first that load testing was useless, until I found this 2010 post: it clearly showed that Redis was falling behind Memcached in terms of performance. Below is a result of its SET/GET benchmark by key size:

Indeed, Redis can hold bigger values than Memcached, but not every application needs to handle objects bigger than 1MB. Only cloud file hosting applications such as Owncloud do, actually. Therefore, Memcached was still competitive in 2010.

Unfortunately, there are two problems with this test:

- This test was done in 2010, with Redis 2.0.0 rc4 and Memcached 1.4.5 (release). Today’s available versions are 3.2 (stable) and 4.0 (RC) for Redis, and 1.5.8 for Memcached. Even without reading the changelogs, there have certainly been a lot of improvements on both sides!

- This test was rigged, because its author developed his own benchmark relying on outdated Redis and Memcached client libraries. It is also impossible to reproduce with today’s versions: the library he used for the Redis bench (libcredis) is deprecated.

Then I found a second bench example that didn’t rely on self-developed C code that I cannot compile with today’s tools. This 2010 test produced mixed results, this time in favor of Redis. It has the same problem, though: the versions of Redis and Memcached are from… 2010!

Thus, I decided to reproduce the load test with today’s versions.

Server Configuration

- OS (AWS EC2 t2.micro instance): Ubuntu 16.04.4 LTS 4.4.0-1050-aws x86_64

- vCPU: Intel(R) Xeon(R) CPU E5-2676 v3 @ 2.40GHz (1 core only)

- file system: SSD disks (314MB/s read speed for small files)

- Redis server v=3.0.6 sha=00000000:0 malloc=jemalloc-3.6.0 bits=64

- Memcached 1.4.25-2ubuntu1.4 amd64

- System monitoring: Netdata 1.9.1, collecting 1,792 metrics every 2 seconds

- Redis benchmark tool: redis-benchmark

- Memcached benchmark tool: mc-benchmark

mc-benchmark is a port of redis-benchmark to the memcached protocol, developed by the author of the second 2010 bench example, which is the one I reproduced. I am more confident in this method because I have access to the code. As shown in his post, both benchmark tools are the same; the only thing that changes is the access protocol. Therefore, we compare apples to apples.
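Because both tools take the same options, the same command line can be built for each of them. The dry-run sketch below only prints the commands and does not run anything. The option letters and values are the ones used in this post's load test script; the helper name `bench_cmd` is made up for this example.

```shell
#!/bin/sh
# Print (without running) the benchmark command for one tool and client count.
bench_cmd() {
    bin=$1
    clients=$2
    iterations=100000   # -n: total number of requests
    keyspace=100000     # -r: keys are drawn at random from this many keys
    payload=32          # -d: size of each value, in bytes
    echo "$bin -n $iterations -r $keyspace -d $payload -c $clients -q"
}

# Same options for both tools; only the binary differs.
for bin in "redis-benchmark -t SET,GET" "mc-benchmark"; do
    bench_cmd "$bin" 50
done
```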

Load Test Script and Tools

Dependencies

The mean and standard deviation are calculated by a tool called datamash.

You need redis-server and redis-tools packages, including the benchmark tool and the client tool.

You also need the gnuplot5 package (144MB) to generate the graphs, but you can use another tool if you like.

You also need to download and install mc-benchmark and compile it:

make

cc -c -std=c99 -pedantic -O2 -Wall -W -g -rdynamic -ggdb ae.c
cc -c -std=c99 -pedantic -O2 -Wall -W -g -rdynamic -ggdb anet.c
cc -c -std=c99 -pedantic -O2 -Wall -W -g -rdynamic -ggdb mc-benchmark.c
cc -c -std=c99 -pedantic -O2 -Wall -W -g -rdynamic -ggdb sds.c
cc -c -std=c99 -pedantic -O2 -Wall -W -g -rdynamic -ggdb adlist.c
cc -c -std=c99 -pedantic -O2 -Wall -W -g -rdynamic -ggdb zmalloc.c
cc -o mc-benchmark -std=c99 -pedantic -O2 -Wall -W -lm -g -rdynamic -ggdb ae.o anet.o mc-benchmark.o sds.o adlist.o zmalloc.o

Load Test Bash Script

#!/bin/bash
# source for this method: http://oldblog.antirez.com/post/redis-memcached-benchmark.html
# redis bench: https://redis.io/topics/benchmarks
# memcached bench: https://github.com/antirez/mc-benchmark

# command used to empty each server before its run (1: Redis, 2: Memcached)
FLUSH[1]="redis-cli flushall"
# FLUSH[2]="echo 'flush_all' | nc 127.0.0.1 11211"
FLUSH[2]="sudo service memcached restart"

# benchmark binary for each server
BIN[1]="redis-benchmark -t SET,GET"
BIN[2]="/usr/src/stress-test/mc-benchmark/mc-benchmark"

CLIENTS="1 5 10 20 30 40 50 60 70 80 90 100 200 300"
payload=32
iterations=100000
keyspace=100000
loop=3

# one results file per server and per operation
RAWFILESET[1]="/tmp/redis-benchmark-set-${iterations}_$(date +"%Y%m%d.%H%M%S").txt"
RAWFILEGET[1]="/tmp/redis-benchmark-get-${iterations}_$(date +"%Y%m%d.%H%M%S").txt"
RAWFILESET[2]="/tmp/mc-benchmark-set-${iterations}_$(date +"%Y%m%d.%H%M%S").txt"
RAWFILEGET[2]="/tmp/mc-benchmark-get-${iterations}_$(date +"%Y%m%d.%H%M%S").txt"

echo ${RAWFILESET[@]}
echo ${RAWFILEGET[@]}

for i in $(seq 1 ${#BIN[@]}); do
  echo "ready to start benchmark of ${BIN[$i]} - Press Enter"
  read x
  eval ${FLUSH[$i]}

  for clients in $CLIENTS; do
    # run the benchmark $loop times; each run yields a SET and a GET figure
    for n in $(seq 1 $loop); do
      # echo $BIN -n $iterations -r $keyspace -d $payload -c $clients -q
      SETGET[$n]=$(${BIN[$i]} -n $iterations -r $keyspace -d $payload -c $clients -q | sed "s/.*\r//g" | grep -E -o "[[:digit:]]+\.[[:digit:]]+")
    done

    # https://www.gnu.org/software/datamash/
    # column 1 is SET, column 2 is GET: write clients, mean and standard deviation
    echo $clients $(echo ${SETGET[@]} | xargs -n2 | awk '{print $1}' | datamash mean 1 pstdev 1) | tee -a ${RAWFILESET[$i]}
    echo $clients $(echo ${SETGET[@]} | xargs -n2 | awk '{print $2}' | datamash mean 1 pstdev 1) | tee -a ${RAWFILEGET[$i]}
  done
done

echo ${RAWFILESET[@]}
echo ${RAWFILEGET[@]}

This is a script and as such, it is free to redistribute and modify.
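The least obvious part of the script is the sed and grep step that pulls the requests-per-second figures out of the benchmark output. The sketch below isolates that step. The sample text is an assumed illustration of what quiet-mode output looks like (progress updates overwritten in place with carriage returns), not captured output, and `extract_rps` and `sample_output` are names made up for this example.

```shell
#!/bin/sh
# A literal carriage return, built portably instead of relying on "\r" in sed.
cr=$(printf '\r')

# Drop everything up to the last carriage return on each line,
# then keep only the decimal numbers.
extract_rps() {
    sed "s/.*$cr//" | grep -Eo '[0-9]+\.[0-9]+'
}

# Assumed shape of one quiet-mode run: a SET line, then a GET line.
sample_output() {
    printf 'SET: 81000.00\rSET: 85106.38 requests per second\n'
    printf 'GET: 90000.00\rGET: 92592.59 requests per second\n'
}

# One run yields two numbers; xargs -n2 puts SET and GET on the same line.
sample_output | extract_rps | xargs -n2
```

Only the last figure of each line survives, which is why each run contributes exactly one SET value and one GET value to the statistics.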

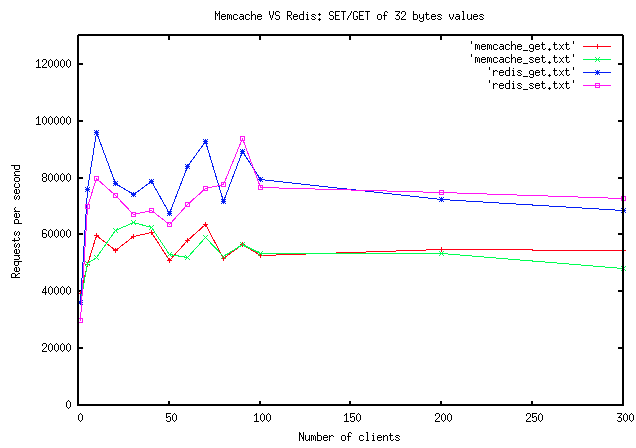

This script benchmarks SET and GET against both servers: 100,000 requests over a keyspace of 100,000 random keys, with 32-byte values, a growing number of clients, and 3 runs for each client count. The output files contain 3 columns:

- number of clients

- mean of requests per second

- standard deviation
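For reference, the last two columns can be reproduced without datamash. The sketch below computes the mean and the population standard deviation (the `pstdev` operation the script asks datamash for) with awk. The function name `mean_pstdev` and the sample figures are made up for this example.

```shell
#!/bin/sh
# Mean and population standard deviation of the first column read from stdin.
mean_pstdev() {
    awk '{ sum += $1; sumsq += $1 * $1; n++ }
         END {
             mean = sum / n
             var = sumsq / n - mean * mean   # population variance
             if (var < 0) var = 0            # guard against rounding noise
             printf "%.2f %.2f\n", mean, sqrt(var)
         }'
}

# Three hypothetical requests-per-second samples for one client count.
printf '%s\n' 80000 82000 84000 | mean_pstdev
```

The population form divides by the number of runs rather than by one less, which matters with only 3 runs per client count: it gives a smaller spread than the sample standard deviation would.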

Gnuplot5 Script

The generated data files are processed by gnuplot5 to output nice PNG graphs. Below is the gnuplot code to create the graphs, with Y error bars showing the standard deviation:

set terminal pngcairo transparent enhanced font "arial,10" fontscale 1.0 size 640, 480
set style data lines
# http://www.gnuplotting.org/multiple-lines-with-different-colors/
set style line 1 lc rgb '#0025ad' lt 1 lw 1 # --- blue
set style line 2 lc rgb '#0025ad' lt 1 lw 1.5 # --- blue
set style line 3 lc rgb '#9060ad' lt 1 lw 1 # --- purple
set style line 4 lc rgb '#9060ad' lt 1 lw 1.5 # --- purple
set style line 5 lc rgb '#00ad4e' lt 1 lw 1 # --- green
set style line 6 lc rgb '#00ad4e' lt 1 lw 1.5 # --- green
set style line 7 lc rgb '#90ad4e' lt 1 lw 1 # --- yellow-green
set style line 8 lc rgb '#99ad4e' lt 1 lw 1.5 # --- yellow-green
set xlabel "Number of clients"
set ylabel "Requests per second"

set title "Redis VS Memcached: SET/GET of 32 bytes values * 10,000"
set output '/data/mydomain/public/gnuplot/redis-benchmark-setget-10000_20180528.181155.png'
plot "/tmp/redis-benchmark-set_20180528.181155.txt" using 1:2:3 with errorbars ls 1 notitle "redis SET 10,000",\
"" using 1:2:($2/($3*1.e5)) smooth acsplines ls 2 title "redis SET 10,000",\
"/tmp/redis-benchmark-get_20180528.181155.txt" using 1:2:3 with errorbars ls 3 notitle "redis GET 10,000",\
"" using 1:2:($2/($3*1.e5)) smooth acsplines ls 4 title "redis GET 10,000",\
"/tmp/mc-benchmark-set_20180528.181155.txt" using 1:2:3 with errorbars ls 5 notitle "mc SET 10,000",\
"" using 1:2:($2/($3*1.e5)) smooth acsplines ls 6 title "mc SET 10,000",\
"/tmp/mc-benchmark-get_20180528.181155.txt" using 1:2:3 with errorbars ls 7 notitle "mc GET 10,000",\
"" using 1:2:($2/($3*1.e5)) smooth acsplines ls 8 title "mc GET 10,000"

set title "Redis VS Memcached: SET/GET of 32 bytes values * 100,000"
set output '/data/mydomain/public/gnuplot/redis-benchmark-setget-100000_20180528.181425.png'
plot "/tmp/redis-benchmark-set-100000_20180528.181425.txt" using 1:2:3 with errorbars ls 1 notitle "redis SET 100,000",\
"" using 1:2:($2/($3*1.e5)) smooth acsplines ls 2 title "redis SET 100,000",\
"/tmp/redis-benchmark-get-100000_20180528.181425.txt" using 1:2:3 with errorbars ls 3 notitle "redis GET 100,000",\
"" using 1:2:($2/($3*1.e5)) smooth acsplines ls 4 title "redis GET 100,000",\
"/tmp/mc-benchmark-set-100000_20180528.181425.txt" using 1:2:3 with errorbars ls 5 notitle "mc SET 100,000",\
"" using 1:2:($2/($3*1.e5)) smooth acsplines ls 6 title "mc SET 100,000",\
"/tmp/mc-benchmark-get-100000_20180528.181425.txt" using 1:2:3 with errorbars ls 7 notitle "mc GET 100,000",\
"" using 1:2:($2/($3*1.e5)) smooth acsplines ls 8 title "mc GET 100,000"

Load Test Results

Raw Performance Comparison

As expected, Memcached is way behind Redis for small 32-byte values:

It’s interesting to note that Memcached was faster at creating keys than retrieving them! I hope to get some time to compare by key size in the future…

Netdata stats

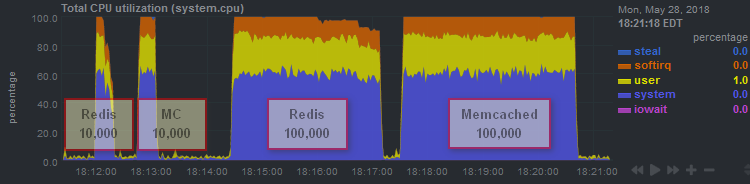

Both Memcached and Redis seem to be only limited by the CPU.

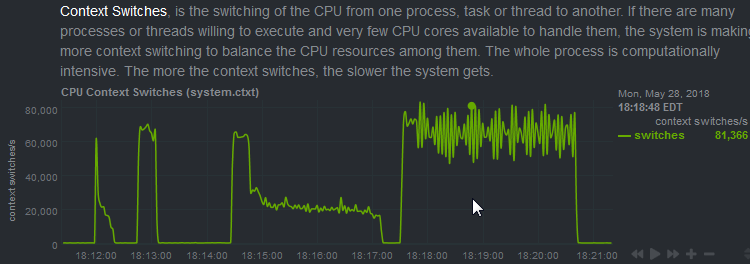

One reason why Memcached is behind could be the way it’s developed: it looks like it’s using too many threads?

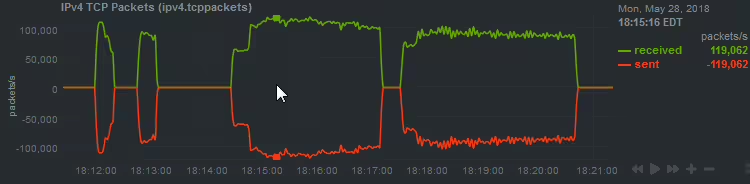

The test took slightly longer for Memcached and Redis seems to be able to ingest more packets per second.

Clear Winner: Redis

Since 2010, Redis got even faster. Back in 2010, Memcached couldn’t use all the CPU available for the same tests. On the VM where I ran the test, it did, and it still took longer to complete than Redis. Therefore, it uses more watts per query than Redis, at least for this limited set of tests.

Whether you use WordPress or a custom development, Redis is the way to go for object caching. It’s well maintained, secure, free, and it benefits from a large community of users.

This test is pretty ridiculous. You put Memcache (a multi-threaded service) on a single core AWS instance meant only for bursting, then compared it to a single-threaded service (Redis) and act surprised that somehow the tool without multi-threading does better?

How about you do this test on an m5d.24xlarge and see how the two stack up.

Just lend me your access codes my friend 😛 m5d.24xlarge is worth $2 to $5 per hour…

I was thinking the same while reading it. He also mentioned that the speed was limited only by the CPU, which means that on a multicore processor Memcached would perform better than in this result (I’m not claiming it would beat Redis, as I haven’t checked). However, this test is flawed because Memcached’s multithreading was not put to use.